TRUST OF AI

Trust Is the Real ROI of AI

Why scaling AI requires more than speed—it requires confidence.

AI is moving quickly from experimentation into day-to-day workflows: forecasting, pricing, customer interactions, finance, operations, and planning. Many teams can now produce compelling outputs in minutes. But there’s a consistent gap between impressive demos and repeatable business value. What closes that gap is trust.

When people don’t trust AI, they hesitate to use it. When they trust it blindly, risk rises—because the costliest failures are rarely obvious. They’re the quiet, plausible mistakes that look reasonable and slip into decisions unnoticed.

In the AI era, trust isn’t a soft concept. It’s an operating requirement.

The trust gaps most organizations underestimate

AI outputs often sound confident. That creates two failure modes that block scale:

1. Under-trust (adoption failure)

Teams dismiss the tool, revert to old habits, and adoption stalls. This happens when results feel inconsistent, don’t reflect real constraints, or can’t be explained.

2. Over-trust (risk failure)

Teams treat outputs as authority. Review standards erode. Human judgment fades. Errors travel downstream because they appear acceptable on the surface—until consequences show up.

Healthy trust sits between these extremes: teams move faster with AI while keeping accountability and decision discipline intact.

Why trust is different with AI than traditional analytics

Traditional analytics tend to feel familiar: defined inputs, documented logic, stable outputs, and clear ownership.

AI adds complexity:

- Outputs can be probabilistic and variable.

- Limitations can be unclear unless explicitly designed.

- Data, prompts, and models change over time.

- Accountability can become diffused (“the model said so”).

Trust erodes when leaders and teams can’t answer basic questions:

- What inputs were used—and what definitions apply?

- What does the system not know?

- What guardrails were in place?

- Who approved this decision?

- How are we monitoring quality and drift?

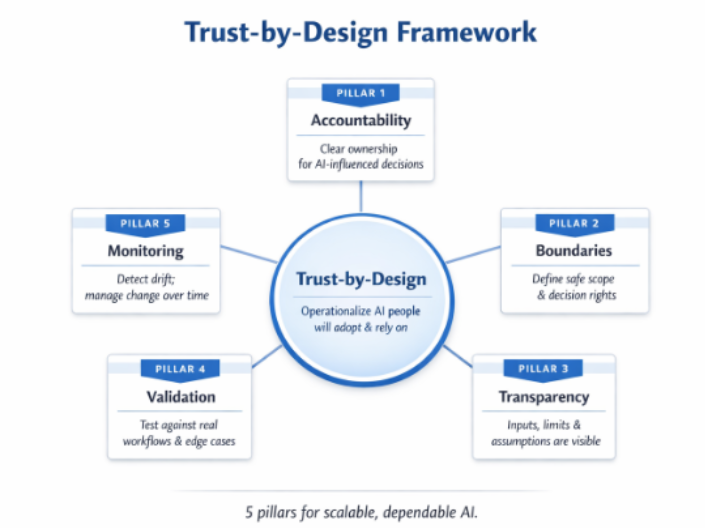

Trust-by-Design: a practical framework for scaling AI

At Ainfore, we treat trust as something you engineer into workflows, not something you assume. The goal is AI that creates value while remaining explainable enough for stakeholders and governed in proportion to risk.

Pillar 1: Accountability

Trust starts with ownership. Define who is responsible for:

- AI configuration (models, prompts, policies)

- Data definitions and refresh cadence

- Validation and approval steps

- Monitoring, incident response, and rollback

Ownership anchors the rest of the framework: boundaries and transparency make expectations explicit, and validation and monitoring keep trust stable over time.

Pillar 2: Boundaries and decision rights

Not every task deserves the same level of autonomy.

Establish explicit boundaries such as:

- Automate: low-risk, repeatable tasks with deterministic checks

- Assist: AI drafts; humans review

- Advise: AI proposes options; humans decide

- Restrict: enhanced controls required or AI not permitted

Boundaries reduce confusion and prevent accidental overreach.
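One way to make these boundaries explicit is to record them as data rather than leave them as tribal knowledge. The sketch below is illustrative only: the workflow names, the `DECISION_RIGHTS` mapping, and the `requires_human_signoff` helper are hypothetical, and a real mapping would come out of a governance review.

```python
from enum import Enum


class Autonomy(Enum):
    AUTOMATE = "automate"   # low-risk, repeatable tasks with deterministic checks
    ASSIST = "assist"       # AI drafts; humans review
    ADVISE = "advise"       # AI proposes options; humans decide
    RESTRICT = "restrict"   # enhanced controls required or AI not permitted


# Hypothetical workflow-to-tier mapping, for illustration only.
DECISION_RIGHTS = {
    "invoice_matching": Autonomy.AUTOMATE,
    "customer_email_reply": Autonomy.ASSIST,
    "price_change": Autonomy.ADVISE,
    "credit_limit_override": Autonomy.RESTRICT,
}


def requires_human_signoff(workflow: str) -> bool:
    """Return True unless the workflow is explicitly cleared for automation.

    Workflows missing from the mapping default to RESTRICT, so nothing
    is automated by accident.
    """
    tier = DECISION_RIGHTS.get(workflow, Autonomy.RESTRICT)
    return tier is not Autonomy.AUTOMATE
```

Defaulting unknown workflows to the most restrictive tier is a deliberate choice here: a new use case has to be classified before it can run unattended.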

Pillar 3: Transparency

Trust improves when people can see enough to use AI responsibly. Transparency should make clear:

- The inputs and definitions used

- Embedded assumptions and limitations

- What “confidence” means in the workflow

- How to escalate edge cases

The goal isn’t perfect explainability. It’s sufficient clarity for responsible use.

Pillar 4: Validation in business reality

The question isn’t “Does it work on average?” It’s “Does it work when the business is messy?”

Validation should include:

- Edge cases (outliers, exceptions, seasonality)

- Operational constraints (capacity, policy, SLAs)

- Failure scenarios (missing data, ambiguity)

- Human review protocols and acceptance criteria tied to outcomes

The fastest way to lose trust is to validate a system in a lab and then deploy it straight into reality.

Pillar 5: Monitoring and change management

AI systems drift: data changes, processes change, and models evolve. Monitoring should track:

- Input drift

- Output drift

- Outcome drift (business impact)

- Policy drift (risk posture and compliance)

Operationally: dashboards, thresholds, periodic evaluation, version control, and clear rollback paths.

Monitoring is what keeps trust stable over time.
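As a rough illustration of what a threshold on input drift can look like, the sketch below compares a recent window of one numeric input against a baseline window. It is a minimal example under stated assumptions: the function names, the mean-shift score, and the default threshold of 2.0 are all hypothetical, and production monitoring would usually rely on more robust statistics and cover outputs and outcomes as well.

```python
from statistics import mean, stdev
from typing import Sequence


def drift_score(baseline: Sequence[float], recent: Sequence[float]) -> float:
    """Shift of the recent mean from the baseline mean, in baseline standard deviations."""
    spread = stdev(baseline)  # sample standard deviation; needs at least two points
    if spread == 0:
        raise ValueError("baseline has no variation; use a different check")
    return abs(mean(recent) - mean(baseline)) / spread


def check_drift(baseline: Sequence[float], recent: Sequence[float],
                threshold: float = 2.0) -> dict:
    """Return the score and whether it crosses the (assumed) alert threshold."""
    score = drift_score(baseline, recent)
    return {"score": score, "alert": score > threshold}


# Example: weekly average order values (made-up numbers).
baseline_window = [10.0, 12.0, 11.0, 9.0, 13.0]
stable_week = [11.0, 10.0, 12.0]
shifted_week = [20.0, 21.0, 19.0]

stable_result = check_drift(baseline_window, stable_week)    # no alert expected
shifted_result = check_drift(baseline_window, shifted_week)  # alert expected
```

An alert on its own decides nothing. It should route to the owner named under accountability, who then chooses between re-validation, rollback, or accepting the change.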

What trust unlocks

When trust is operationalized, benefits compound:

- Adoption scales beyond early champions

- Decision velocity increases without losing accountability

- Risk decreases through earlier detection and controls

- Outcomes become repeatable across teams and functions

- Leadership gains confidence to expand AI responsibly

Trust is what converts AI capability into organizational capability.

A practical starting point

If you’re moving from pilots to production, start with three steps:

- Identify 3–5 high-value workflows where AI can assist in decision-making.

- Define decision rights and approval points before scaling.

- Implement validation and monitoring early so adoption doesn’t outpace trust.

About Ainfore

Ainfore helps organizations design and scale AI-enabled decision workflows with trust built in, from use-case design and data readiness to governance, validation, and monitoring.